However, now that we’ve got enough feedback, it’s time to reveal what we’ve done to make it work. There’s a blog post coming soon on the BBC R&D blog, but till then… Happyworm have done an excellent blog post explaining the whole thing in serious detail, including how to reveal the secret easter egg/control panel!

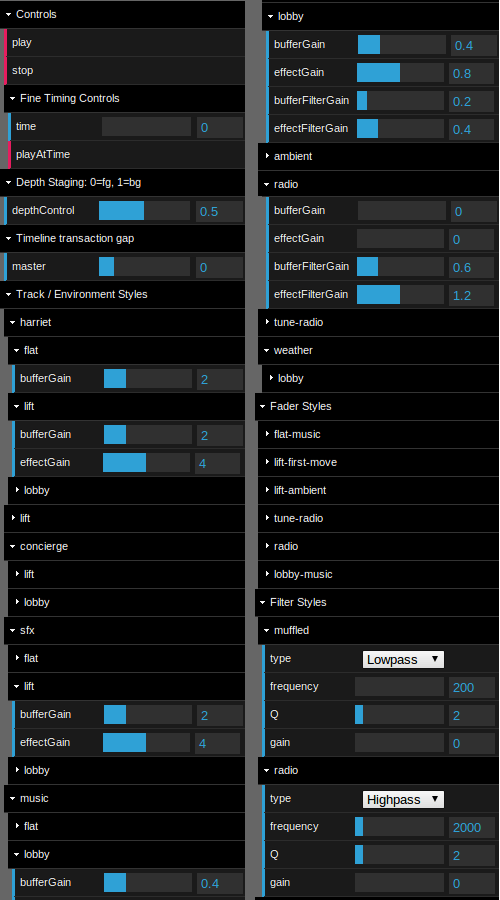

To open the easter egg, wait until Breaking Out has finished loading, then click under the last 2 of the copyright 2012 at the bottom right. You’ll then have access to the Control Panel.

The easter egg really unlocks the power of Perceptive Media like never before.

Everything is controllable and the number of options is insane, but it’s all possible with the power of object-based audio (the driving force behind Perceptive Media).

In practice, just changing the fade between foreground and background objects can be a massive accessibility aid for those who are hard of hearing or in a noisy environment, like driving a car. Tony Churnside is working on the advantages of object-based audio, so I won’t even try to draw conclusions about what’s possible, but let’s say the whole business of turning your sound system up and down to hear the dialogue could be removed with Perceptive Media. That’s because, of course, Perceptive Media isn’t just the objects and delivering the objects; it’s also the feedback and sensor mechanisms.
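To make the foreground/background fade a bit more concrete, here is a minimal sketch of how a single balance control could be turned into two gain values. It is not taken from the Breaking Out code: the function name `objectGains`, the 0 to 1 `balance` range and the equal-power curve are all my own assumptions for illustration.

```javascript
// Hypothetical helper (not from the Breaking Out source): maps one balance
// control onto gains for a foreground group (e.g. dialogue) and a background
// group (e.g. music and effects), using an equal-power curve.
// balance = 0 means background only, balance = 1 means foreground only.
function objectGains(balance) {
  // Clamp the input so out-of-range values cannot produce negative gains.
  const x = Math.min(1, Math.max(0, balance));
  return {
    foreground: Math.sin((x * Math.PI) / 2),
    background: Math.cos((x * Math.PI) / 2),
  };
}

// Example: push the balance towards the dialogue for a noisy car.
const gains = objectGains(0.8);
console.log(gains.foreground > gains.background); // true
```

In a browser, each value could then be written to the `gain.value` of a Web Audio `GainNode` that the corresponding group of objects is routed through.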

Mark Panaghiston writes in conclusion…

The Web Audio API satisfied the goals of the project very well, allowing the entire production to be assembled in the client browser. This enabled control over the track timing, volume and environment acoustics by the client. From an editing point of view, this allowed the default values to be chosen easily by the editor and have them apply seamlessly to the entire production, similar to when working in the studio.

The Web Audio API was amazing… and we timed it just about right. At the start of the year, it would not have worked in any browser except Chrome. But every few months we saw other browsers catch up on the Web Audio API front, and I’m happy to say the experiment kind of works on Firefox and Opera.

One of the most complicated parts of the project was arranging the asset timelines into their absolute timings. We wanted the input system to be relative since that is a natural way to do things, “Play B after A”, rather than, “Play A at 15.2 seconds and B at 21.4 seconds.” However, once the numbers were crunched, the `noteOn` method would easily queue up the sounds in the future.

The main deficiency we found with the Web Audio API was that there were no events that we could use to know when, for example, a sound started playing. We believe this is in part due to it being known when that event would occur, since we did tell it to `noteOn` in 180 seconds time, but it would be nice to have an event occur when it started and maybe when its buffer emptied too. Since we wanted some artwork to display relative to the storyline, we had to use timeouts to generate these events. They did seem to work fine for the most part, but having hundreds of timeouts waiting to happen is generally not a good thing.
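The “Play B after A” idea can be sketched in a few lines. This is only an illustration of the relative-to-absolute step, not Happyworm’s actual code: the cue format (`id`, `duration`, `after`, `gap`) and the function name `resolveTimeline` are assumptions I’ve made up for the example.

```javascript
// Hypothetical cue format (an assumption for illustration):
//   id       - unique name of the sound
//   duration - length in seconds
//   after    - optional id of the cue this one follows
//   gap      - optional pause in seconds before this cue starts
function resolveTimeline(cues) {
  const byId = new Map(cues.map((cue) => [cue.id, cue]));
  const starts = new Map(); // memoised absolute start times

  function startOf(id, seen) {
    if (starts.has(id)) return starts.get(id);
    const cue = byId.get(id);
    if (!cue) throw new Error(`Unknown cue: ${id}`);
    if (seen.has(id)) throw new Error(`Circular reference at cue: ${id}`);
    seen.add(id);

    let start = cue.gap || 0;
    if (cue.after !== undefined) {
      // A relative cue starts when its predecessor ends, plus any gap.
      const previousStart = startOf(cue.after, seen);
      start += previousStart + byId.get(cue.after).duration;
    }
    starts.set(id, start);
    return start;
  }

  return cues.map((cue) => ({
    id: cue.id,
    start: startOf(cue.id, new Set()),
    duration: cue.duration,
  }));
}

const timeline = resolveTimeline([
  { id: "A", duration: 10, gap: 2 },
  { id: "B", duration: 4, after: "A", gap: 0.5 },
  { id: "C", duration: 3, after: "B" },
]);
console.log(timeline.map((cue) => `${cue.id} at ${cue.start}s`).join(", "));
// prints "A at 2s, B at 12.5s, C at 16.5s"
```

Each absolute `start` could then be added to the audio context’s current time and handed to `noteOn` (since renamed `start` on `AudioBufferSourceNode`), with a matching timeout for the artwork, much as Mark describes.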

Yes, ideally we would want to be able to turn a written script into a JavaScript file complete with timings. It’s something which would make Perceptive Media a lot more accessible to narrative writers.

And finally, the geo-location information was somewhat limited. We had to make it specific to the UK simply because online services were either expensive or heavily biased towards sponsored companies. For example, ask for the local attractions and get back a bunch of fast food restaurants. But in practice though, you’d need to pay for a service such as this and this project did not have the budget.

Yes, that was one of the limitations we had to accept for cost reasons, and because of that we couldn’t shout about it from the rooftops to the world. However, the next experiment/prototype will be usable worldwide, just so we can talk about Perceptive Media on a global stage if needed.

As Harriet said, “OK, I can do this.” And we did!

Yes we did! And we proved Perceptive Media can work, and what a fine achievement it is! This is why I can’t shut up about Perceptive Media. Whenever we talk about the clash of interactivity and narrative I can’t help but pipe up about Perceptive Media, and why not? It could be the next big thing, and I have to thank James Barrett for coming up with the name after I had originally called it the less friendly Intrusive Media.

Not only did we prove that, but we also proved that things off the work plan in R&D can be as valid as things on it. And finally, the ideology of looking at what’s happening on the darknet, understanding it and thinking about how it can scale has also been proven…

I love my job and love what I do…

Happyworm were a joy to work with and the final prototype was not only amazing, but they also believed in the ideals of open sourcing the code so others can learn, understand and improve on it. You should download Perceptive Media from GitHub and have a play if you’ve not done so yet… what are you waiting for?